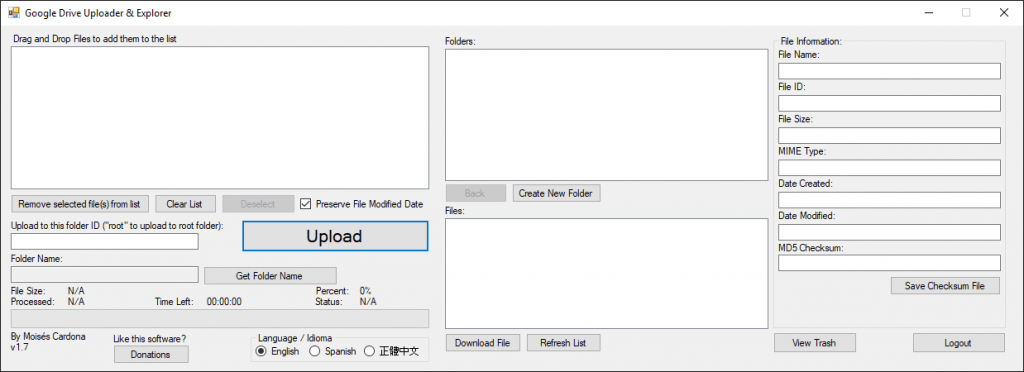

GitHub Commits I’ve made today to my Google Drive tool, Google Drive Uploader & Explorer (6/7/2017)

Hi everyone,

In this post, I’m gonna talk about a few changes I’ve made to my software, Google Drive Uploader & Explorer, which are available right now on GitHub.

Improvements when adding folders to the Upload Queue

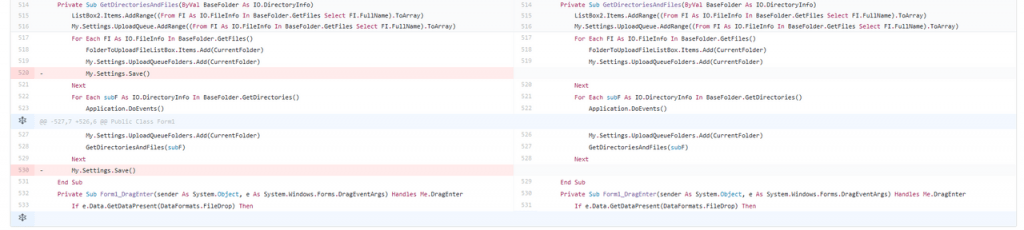

So, the first improvement I made today was removing some calls to My.Settings.Save(), which is the call responsible for saving the software’s data to the local configuration file, found in the AppData\Local folder under the software’s name.

In version 1.7, which is the version I’m currently developing, I added the ability to upload files to different folders. To do this, you just navigate to the folder you wish to upload files to, and then you drag and drop the files into the software. The dropped files are then assigned the folder you are currently viewing, so they upload to that folder.

The issue was that I was calling My.Settings.Save() continuously while doing this, which hurt the software’s performance, especially when dropping a folder full of files: the configuration file was written every time a file was added in the loop that searches a folder for files. The call inside that function was also unnecessary, because the main DragDrop event already calls My.Settings.Save() at the end, once all files have been added to the ListBox element. I’ve removed it, and now the software doesn’t hang and is more responsive.
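Here’s a minimal sketch of the idea, not the actual code from the commit. SaveSettings is a hypothetical stand-in for My.Settings.Save() that only counts how often it gets called, and AddToQueue and the file names are made up for illustration. The point is that the loop only queues files, and the save happens once afterwards.

```vbnet
Imports System
Imports System.Collections.Generic

Module SaveOncePerDropSketch
    ' Counts calls to the stand-in below (hypothetical, for illustration only).
    Public saveCalls As Integer = 0

    ' Stand-in for My.Settings.Save(); the real call writes the config file.
    Sub SaveSettings()
        saveCalls += 1
    End Sub

    ' Adds every dropped path to the queue without saving inside the loop.
    Sub AddToQueue(paths As IEnumerable(Of String), queue As List(Of String))
        For Each filePath As String In paths
            queue.Add(filePath)
        Next
    End Sub

    Sub Main()
        Dim queue As New List(Of String)
        Dim dropped As String() = {"a.txt", "b.txt", "c.txt"}

        AddToQueue(dropped, queue)
        SaveSettings() ' single write, after all files are queued

        Console.WriteLine($"{queue.Count} files queued, {saveCalls} save call(s)")
    End Sub
End Module
```

With the save inside the loop, the number of writes grows with the number of files in the dropped folder; with the save after the loop, it stays at one.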

Changes in this commit:

Commit URL: https://github.com/moisespr123/GoogleDriveUploadTool/commit/a4a16ba9eead795a053d25190ec64e3830764632

For Advanced Users: Change the Upload Chunk Size

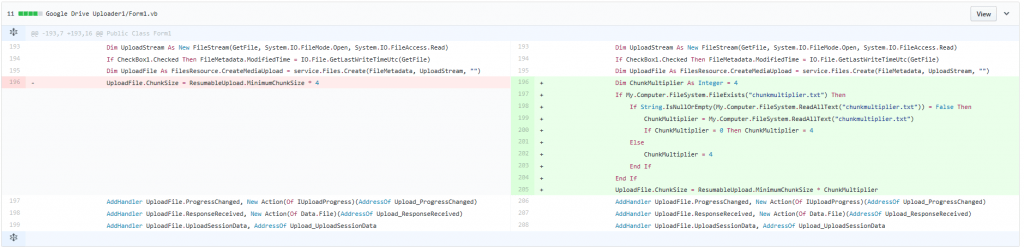

This is especially for those who upload files using a smartphone or tablet hotspot. Sometimes, depending on where you are, the signal isn’t good, or the speed drops dramatically. Also, if you have a very slow internet connection, of 128kb or so, the software may not behave correctly. Why? Because in previous versions, I had hardcoded the upload chunk size to 1MB, and if your connection is very slow, the software would time out and retry uploading the chunk.

The Google Drive API works by uploading files in chunks. Its minimum chunk size is 256KB, so to get the 1MB chunk size, I multiply the 256KB by 4. In the .NET API, the minimum chunk size is available as ResumableUpload.MinimumChunkSize, and that value is what I multiply by 4.
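The arithmetic can be sketched like this. To keep the example runnable without the Google API package, MinimumChunkSize here is a local constant standing in for ResumableUpload.MinimumChunkSize, and ChunkSizeFor is a hypothetical helper name, not something from the tool’s source.

```vbnet
Imports System

Module ChunkSizeSketch
    ' Stand-in for ResumableUpload.MinimumChunkSize (256 KB, in bytes).
    Public Const MinimumChunkSize As Integer = 256 * 1024

    ' Hypothetical helper: chunk size in bytes for a given multiplier.
    Function ChunkSizeFor(multiplier As Integer) As Integer
        Return MinimumChunkSize * multiplier
    End Function

    Sub Main()
        Console.WriteLine(ChunkSizeFor(4)) ' 1048576 bytes, i.e. 1 MB
    End Sub
End Module
```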

In the latest commit, this has changed. While the default chunk size remains 1MB (256KB x 4 = 1MB), the software now looks for a file named chunkmultiplier.txt. If this file exists, it reads the value inside it. With this, the user can effectively bypass the hardcoded 1MB chunk size and avoid timeout issues caused by their connection not uploading 1MB fast enough.

The file must contain a number, from 1 onward. This number is multiplied by 256KB, so if the file has the number 8, for example, the chunk size will be 256KB x 8 = 2MB.

If the file has 0 as its contents or is empty, the software will use the default of 4 (1MB chunk size).
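A rough sketch of that lookup could look like the following. It is not the code from the commit: ReadChunkMultiplier is a hypothetical name, MinimumChunkSize is again a local stand-in for ResumableUpload.MinimumChunkSize, and falling back to 4 for negative or non-numeric contents is my assumption (the rules above only cover a missing file, an empty file, and 0).

```vbnet
Imports System
Imports System.IO

Module ChunkMultiplierSketch
    Public Const DefaultMultiplier As Integer = 4
    ' Stand-in for ResumableUpload.MinimumChunkSize (256 KB, in bytes).
    Public Const MinimumChunkSize As Integer = 256 * 1024

    ' Returns the multiplier stored in the file, or the default when the file
    ' is missing, empty, 0, or (assumption) negative or not a whole number.
    Function ReadChunkMultiplier(filePath As String) As Integer
        If Not File.Exists(filePath) Then Return DefaultMultiplier

        Dim value As Integer
        Dim contents As String = File.ReadAllText(filePath).Trim()
        If Integer.TryParse(contents, value) AndAlso value >= 1 Then
            Return value
        End If
        Return DefaultMultiplier
    End Function

    Sub Main()
        Dim multiplier As Integer = ReadChunkMultiplier("chunkmultiplier.txt")
        Console.WriteLine($"Chunk size: {MinimumChunkSize * multiplier} bytes")
    End Sub
End Module
```

So on a slow hotspot, a chunkmultiplier.txt containing just 1 would give 256KB chunks, the smallest the API allows.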

Changes in this commit:

Commit URL: https://github.com/moisespr123/GoogleDriveUploadTool/commit/d22f4f9687339f8e79f9004b10589016155a6525

That’s all for the moment!

Remember you can see the software Source Code here.